Lab Blog

Oct 11, 2015

On reviewing - part 1

However, the experiences I have gathered from the admittedly few manuscripts I have reviewed or co-reviewed (26 in total) may be of some interest, and may teach you a thing or two about what to expect as a reviewer. Most of the articles I received were from low- to medium-impact-factor journals. Their average impact factor is approximately 7, roughly where the Journal of Cell Science (IF 5.4), PLOS Genetics (IF 7.5), or Circulation: Heart Failure (IF 6.7) would be.

I recommended acceptance in 73% of cases. However, the final acceptance rate was approximately 57% (some of the manuscripts I reviewed are still in process). Most of the time when I rejected a manuscript, it was because of problems with the figures and/or the methodology. Indeed, about 15% of manuscripts had severe problems with one or more of their figures, some of which bordered on deception and maybe even fraud. Spotting problems with figures is something you become sensitized to these days, especially thanks to sites like Retraction Watch and PubPeer. And I am not even specifically looking for potential cases of text plagiarism; rather, I hope that journals have and use sophisticated detection software.

A 15% rate of manuscripts showing evidence of mistaken or willful figure manipulation may seem high, considering that 'problematic' papers (those that end up being corrected or, even worse, retracted) make up a very small percentage of the published science out there. Judging from my experience, one cannot overestimate the importance of the job that (volunteer!) reviewers, as well as journal editors and staff, do to ensure that manuscripts are worthy of publication and that major 'flaws' are detected before a manuscript goes to press.

Here are some (hopefully well enough anonymized) examples that I encountered.
Example 1: I found this one only in the second revision of the manuscript, and nearly overlooked it, as only parts of the graphs were identical. The manuscript came from a lab that usually publishes very good science, so I put it in the 'genuine mistake' category and suggested acceptance once all my concerns had been addressed. The manuscript was eventually published.

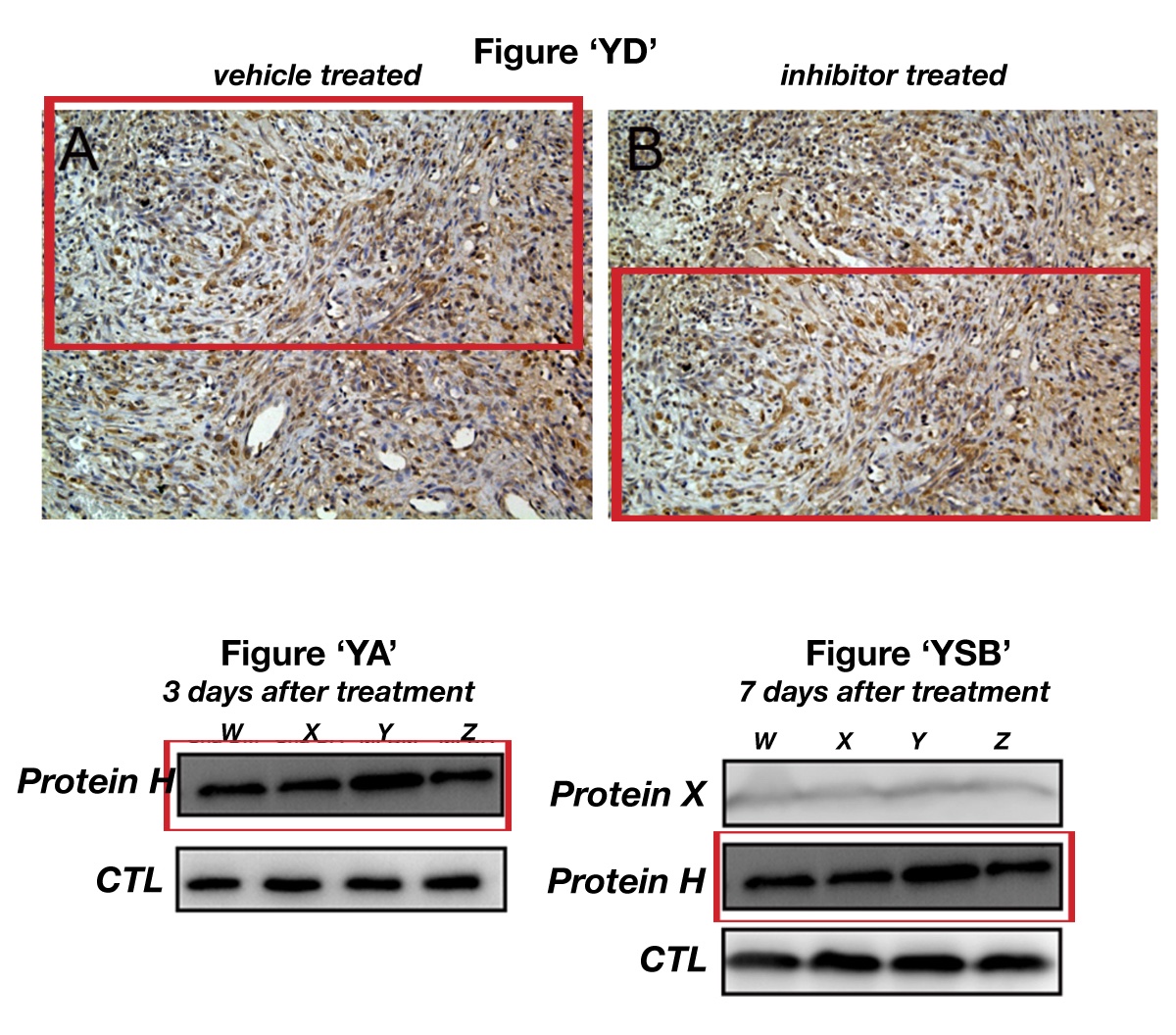

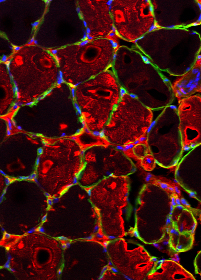

Example 2: This manuscript had several shortcomings in the figures, two of which I highlight here. First, histology images that were supposed to come from two different animals, one vehicle-treated control (A) and one treated with inhibitor (B), rather obviously came from the same specimen. Second, a blot that was supposed to show changes to a protein "H" 3 days after 'treatment' was also used, in a different figure, to show changes to the same protein 7 days after treatment. This manuscript was ultimately rejected.

Example 3: This is the worst 'bad' manuscript I have encountered thus far. Several of the figures used the same controls over and over again, including repeated re-use of a rather strange-looking loading control. A close look at the data also revealed the apparent re-use of some bands for an entirely different experiment, after another band had been cropped away and the image flipped. Like the manuscript in example 2, this one was rejected by the journal.

Update 11-09-15: I enhanced the anonymization of the images used for examples 2 and 3, and modified the text to remove gene and protein names.

Please remember the disclaimer.